A crypto founder had his laptop compromised when he joined what appeared to be a Microsoft Teams call with Pierre Kaklamanos, a Cardano Foundation contact he had spoken with before.

When “Pierre” reached out about Atrium and sent a Teams invite, nothing seemed out of place. On the call, the face and voice matched what he remembered, and two other apparent foundation members were present.

When the call lagged and dropped him, a prompt told him his Teams software was outdated and needed reinstalling through Terminal. He ran the command, then shut the laptop down because the battery was dying, which, in retrospect, limited the damage.

He describes himself as “fairly technically savvy,” which is part of the point: the attack worked because the context felt legitimate.

Social engineers have always relied on familiarity, and executing that at scale once required either a compromised account or weeks of text-based rapport-building.

The video call was the authentication layer, the thing victims learned to trust, and replicating it is now within reach.

Fake update

Microsoft documented campaigns in February and March 2026 in which malicious files masqueraded as workplace apps, such as msteams.exe and zoomworkspace.clientsetup.exe, with phishing lures that mimicked legitimate Teams and Zoom meeting workflows.

In a separate warning, Microsoft described “ClickFix”-style prompts targeting macOS users, instructing them to paste commands into Terminal and going after browser passwords, crypto wallets, cloud credentials, and developer keys.

The fake Teams update matches both patterns at once.
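To make the ClickFix pattern concrete, here is a minimal Python sketch of a sanity check someone could run on a command before pasting it into Terminal. The pattern list and the `flag_command` helper are hypothetical illustrations, not Microsoft's detection logic, and an attacker can easily obfuscate around regexes like these.

```python
import re

# Hypothetical red-flag patterns for commands a "fix" prompt asks you to paste.
# Illustrative heuristics only, not a vetted detection ruleset.
RED_FLAGS = {
    "pipes remote content into a shell": re.compile(
        r"\b(curl|wget)\b[^|]*\|\s*(sudo\s+)?(ba|z)?sh\b"
    ),
    "decodes base64 and runs it": re.compile(
        r"base64\s+(-d|--decode|-D)\b.*\|\s*(sudo\s+)?(ba|z)?sh\b"
    ),
    "runs AppleScript from the command line": re.compile(r"\bosascript\b"),
    "strips macOS quarantine attribute": re.compile(
        r"xattr\s+-[a-z]*d[a-z]*\s+com\.apple\.quarantine"
    ),
}


def flag_command(cmd: str) -> list[str]:
    """Return the reasons a pasted command looks risky (empty list if none match)."""
    return [reason for reason, pattern in RED_FLAGS.items() if pattern.search(cmd)]


print(flag_command("curl -fsSL https://example.com/fix.sh | bash"))
```

The stronger rule needs no code: a legitimate Teams or Zoom update should not require pasting commands into Terminal, so any such request during a call is itself the warning sign.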

Google Cloud’s Mandiant unit described a crypto-focused intrusion built on the same structure: a compromised Telegram account, a spoofed Zoom meeting, what witnesses described as a deepfake-style executive video, and troubleshooting commands that launched the infection.

Mandiant said it could not independently verify which AI model, if any, generated the video, but confirmed the group used fake meetings and AI tools during social engineering.

On Apr. 24, the real Pierre Kaklamanos posted on X that his Telegram had been hacked and that someone was impersonating him, along with “a few other people in the industry this week.”

He told followers to avoid clicking links or booking meetings through the account and to verify contact through LinkedIn direct messages.

By then, the founder had already messaged the account suggesting they switch to Google Meet. Whoever controlled Pierre’s Telegram account replied that he had gotten busy and asked to reschedule, meaning the attacker was still managing the persona after the call ended.

That exchange turns the incident from an isolated embarrassment into a live campaign signal: the tactic is active, the account compromise is the entry point, and the relationship history is the weapon.

| Stage | What the victim saw | Why it looked legitimate | What the attacker was likely trying to achieve |
|---|---|---|---|
| Initial outreach | “Pierre” reached out about Atrium and suggested a call | The victim had spoken with Pierre before, including on video | Reopen an existing trust relationship instead of starting from a cold approach |
| Meeting setup | A Microsoft Teams invite for the next day | Teams is a normal business workflow and the topic was plausible | Move the target into a controlled environment that felt routine |
| Live call | Familiar face, familiar voice, plus two other apparent Cardano Foundation members | The social context matched the victim’s memory of prior interactions | Lower suspicion and make the call itself feel like verification |
| Call disruption | Lagging, instability, then getting kicked out | Technical glitches are common in video calls | Create frustration and set up the fake “fix” as a normal troubleshooting step |
| Fake update prompt | A message saying Teams was outdated and needed reinstalling through Terminal | Software update prompts are familiar, and the user rarely used Teams | Get the victim to execute a malicious command directly |
| Command execution | The victim ran the command, then shut down the laptop because the battery was dying | The workflow still felt like a routine app fix at that moment | Launch the infection chain and gain access to credentials or device data |
| Post-call follow-up | The victim suggested switching to Google Meet; the attacker said he got busy and asked to reschedule | The persona kept behaving like a real contact after the failed attempt | Keep the relationship alive for another attempt and avoid immediate suspicion |

Why generative media changes the threat surface

The founder said he now believes the call may have involved AI-generated or manipulated video. Forensic confirmation of the tools is lacking, so the OpenAI connection here rests on the company’s own safety documentation.

OpenAI launched its 4o image generation model on Mar. 25, describing it as capable of “precise, accurate, photorealistic outputs,” and released the ChatGPT Images 2.0 System Card on Apr. 21.

The company acknowledged that the model’s “heightened realism” could, absent safeguards, enable more convincing deepfakes of real people, places, or events. One of the leading AI labs has now put on record that its own image model raises the ceiling on what a convincing fake can look like.

The World Economic Forum said in January 2026 that generative AI lowers the barrier to phishing while raising its credibility, through realistic deepfake audio and video that can evade both detection systems and human scrutiny.

In March 2026, INTERPOL declared financial fraud one of the world’s most severe and rapidly evolving transnational crimes, identifying deepfake videos, audio, and chatbots as tools that make impersonating trusted people easier to carry out at scale.

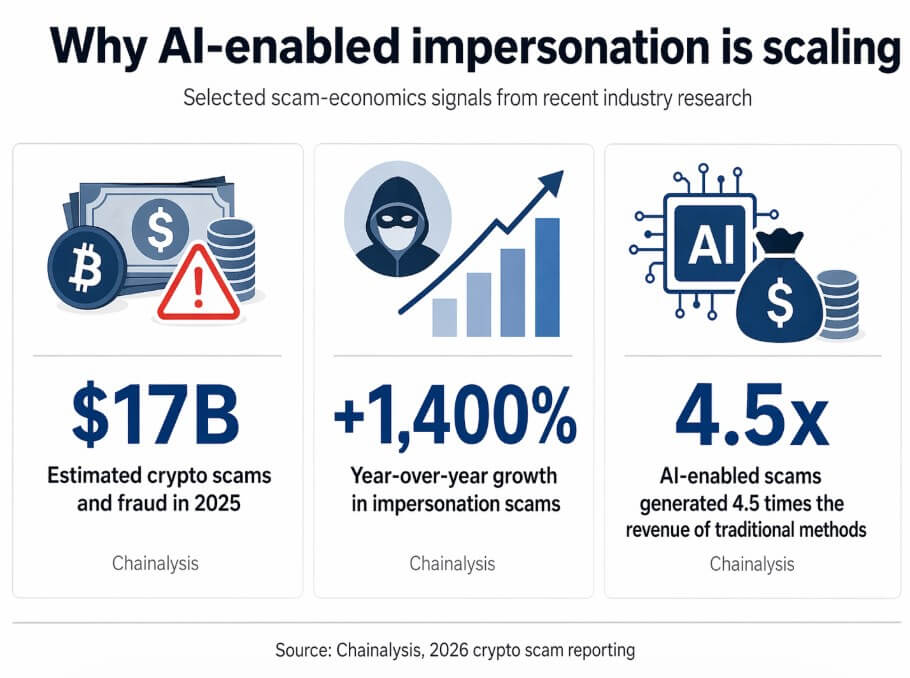

Chainalysis estimated that crypto scams and fraud reached $17 billion in 2025, with impersonation scams up 1,400% year over year and AI-enabled scams generating 4.5 times as much revenue as traditional methods.

Crypto attracts this class of attack because it combines high-value targets, fast settlement rails, and a casual communications culture in which Telegram introductions and ad hoc video calls between founders are routine.

Mandiant documented that the group behind the crypto Zoom intrusion targeted software firms, developers, venture firms, and executives across payments, brokerage, staking, and wallet infrastructure.

Mandiant noted that the victim’s data could be used to seed future social engineering, with each compromise producing material for the next.

Two paths ahead

On Apr. 17, Zoom announced a partnership to add real-time human verification to meetings, including a “Verified Human” badge and a “Deep Face Waiting Room,” treating participant authenticity as a product problem.

Gartner predicts that by 2027, 50% of enterprises will invest in disinformation-security products or TrustOps methods, up from less than 5% today.

In the bull case, that buildout reaches critical mass quickly enough that attackers must defeat multiple independent trust layers to complete a conversion, and the economics of impersonation campaigns deteriorate.
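The bull-case logic is multiplicative. A minimal sketch, assuming each trust layer stops an impersonator independently of the others; the pass rates below are hypothetical numbers chosen for illustration, not measured figures.

```python
from math import prod


def attack_success_probability(pass_rates: list[float]) -> float:
    """Probability an impersonator clears every trust layer.

    Assumes layers are independent: each entry is the (hypothetical) chance
    the attacker gets past that one check.
    """
    return prod(pass_rates)


video_only = attack_success_probability([0.9])  # the call itself as the only check
layered = attack_success_probability([0.9, 0.5, 0.5, 0.5])  # plus three independent checks
print(f"video only: {video_only:.3f}, layered: {layered:.4f}")
```

With these illustrative numbers, three extra checks that an impersonator passes half the time cut the success rate from 90% to about 11%, so each conversion takes roughly eight times as many attempts. The independence assumption carries the result: checks routed through the same hijacked Telegram account fail together and add little.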

In the bear case, the timeline compresses before defenses do. Gartner warned that AI agents could halve the time required to exploit account takeovers by 2027, narrowing the window for human hesitation or security team intervention.

Deloitte estimated that generative AI-enabled fraud losses in the US alone could climb from roughly $12 billion in 2023 to $40 billion by 2027.
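That projection implies steep compounding. A quick check of the annual growth rate the two endpoints imply, using the rounded figures above; this is arithmetic on the article’s numbers, not Deloitte’s own model.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1 / years) - 1


rate = implied_cagr(start=12e9, end=40e9, years=2027 - 2023)
print(f"Implied annual growth: {rate:.1%}")  # → Implied annual growth: 35.1%
```

In other words, losses would have to grow by roughly 35% a year, compounding, for four consecutive years.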

| Scenario | What changes | What stays vulnerable | Implication for crypto firms |
|---|---|---|---|
| Bull case | Verification tools spread quickly: human-verification badges, liveness checks, stronger internal trust rails, and more formal approval workflows | Informal founder-to-founder chats, legacy messaging habits, and ad hoc scheduling still create openings | Attackers face more friction and lower conversion rates because they must defeat multiple trust layers instead of one |
| Bear case | AI-generated impersonation improves faster than defenses are adopted; fake meetings and fake troubleshooting become standard playbooks | Public-facing executives, Telegram-based outreach, video-first verification habits, and staff under time pressure | Relationship hijacking becomes routine, and each compromise creates material for the next scam |
| What success looks like | Sensitive requests get verified across separate channels, with known numbers, shared passphrases, hardware keys, or pre-agreed internal systems | Social pressure, urgency, and trust in familiar faces and voices cannot be fully eliminated | Firms reduce the chance that one spoofed call can lead directly to compromise |
| What failure looks like | Teams rely on the call itself as proof of identity, even as deepfake and impersonation tools improve | Video remains persuasive even when it is no longer reliable as authentication | Crypto organizations become easier to target because executives are both high-value victims and reusable lure assets |

Each public-facing crypto govt turns into each a goal and a lure asset, a supply of voice recordings, video clips, and relationship graphs that attackers can deploy in opposition to the following sufferer.

Zoom is building liveness checks into meetings, Microsoft is documenting attack chains that impersonate its own software, and the FBI has warned that malicious actors are already using AI-generated voice and text to impersonate trusted contacts, advising against assuming a message is authentic just because it appears to come from a known person.

Verification now requires independent rails, such as a known phone number, a hardware key, a shared passphrase established before any meeting, or a pre-agreed internal channel that no attacker has accessed.
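The shared-passphrase rail can be hardened so the passphrase itself is never spoken on a call an attacker may be recording. A minimal challenge-response sketch using Python’s standard library, assuming both parties agreed on the passphrase and salt in person or over a channel they already trust; the helper names, salt value, iteration count, and 8-character response length are illustrative choices, not a standard.

```python
import hashlib
import hmac
import secrets

# Assumption: agreed in advance, out of band, never sent over the chat app.
SHARED_SALT = b"example-team-salt"  # hypothetical value


def derive_key(passphrase: str, salt: bytes = SHARED_SALT) -> bytes:
    # Stretch the passphrase so guessing it from a captured response is costly.
    return hashlib.pbkdf2_hmac("sha256", passphrase.encode("utf-8"), salt, 200_000)


def make_challenge() -> str:
    # Fresh random nonce per conversation, so a recorded answer can't be replayed.
    return secrets.token_hex(8)


def respond(key: bytes, challenge: str) -> str:
    # Short enough to read aloud: 8 hex chars = 32 bits, an illustrative trade-off.
    return hmac.new(key, challenge.encode("ascii"), hashlib.sha256).hexdigest()[:8]


def verify(key: bytes, challenge: str, response: str) -> bool:
    # Constant-time comparison of the expected and received answers.
    expected = respond(key, challenge).encode()
    return hmac.compare_digest(expected, response.strip().lower().encode())


my_key = derive_key("correct horse battery staple")
challenge = make_challenge()  # send this to the contact
answer = respond(my_key, challenge)  # what the real contact computes and reads back
print(verify(my_key, challenge, answer))  # → True
```

An impersonator who controls the Telegram account but never learned the passphrase cannot produce the answer, however convincing the video looks. The scheme is only as strong as its out-of-band setup: a passphrase ever typed into the compromised account protects nothing.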